Understanding Lambda Concurrency

Lambda is highly scalable by nature. However, there are some limitations you need to consider when a lot of Lambda functions run simultaneously.

Please note: This does not apply to every scenario. It mainly matters for systems with high throughput.

Account-Level Concurrent Execution Limit

At the time of writing, Lambda has a soft limit of 1000 concurrent executions per region. This means that, at any given moment, the total number of executions running across all of your Lambda functions in a single region cannot exceed 1000.

Example:

Let’s say you have 3 Lambda functions in the same region:

- A: an SQS poller function, which has X executions running at this very moment.

- B: a function that updates DynamoDB from a Kinesis stream, which has Y executions running at this very moment.

- C: a function that processes files in S3, which has Z executions running at this very moment.

So, X + Y + Z must be less than or equal to 1000.

Even if demand is higher, no new execution can start until a running one completes; Lambda throttles the extra invocations instead.
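To make the arithmetic concrete, here is a minimal Python sketch of the account-level rule, assuming the default limit of 1000. The function name `can_start_execution` and the sample counts are hypothetical; Lambda performs this check internally and exposes no such function.

```python
# A hypothetical model of the account-level check, not an AWS API.
ACCOUNT_LIMIT = 1000  # default regional limit assumed in this article


def can_start_execution(running: dict[str, int], limit: int = ACCOUNT_LIMIT) -> bool:
    """Return True if one more execution fits under the regional limit.

    `running` maps each function name to its in-flight executions;
    the limit applies to the sum across all functions in the region.
    """
    return sum(running.values()) < limit


# X, Y and Z for functions A, B and C from the example above
running = {"A": 600, "B": 300, "C": 100}
print(can_start_execution(running))  # False: 600 + 300 + 100 = 1000
running["C"] -= 1  # one of C's executions completes
print(can_start_execution(running))  # True: 999 < 1000
```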

Please note: This 1000 limit can be increased by creating a case in the AWS Support Center under “Service Limit Increase” and describing your use case.

To keep the rest of the calculations simple, we will assume that this region has the default concurrency limit of 1000.

Function-Level Concurrent Execution Limit

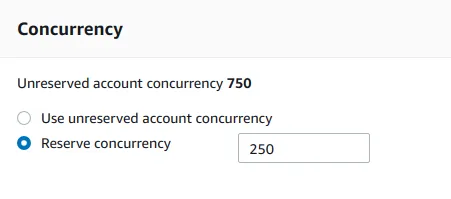

You can reserve concurrency on a per-function basis; this is called reserved concurrency. The rest of the region's maximum concurrency, which is shared by all functions without a reservation, is called unreserved concurrency.

Example:

- A: a function with 250 reserved concurrent executions.

- B: a function with 100 reserved concurrent executions.

- C: a function with no reserved concurrency, which has X executions running at this very moment.

- D: a function with no reserved concurrency, which has Y executions running at this very moment.

So, X + Y must be less than or equal to 650 (1000 - (250 + 100)).
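The same example can be modelled in a few lines of Python. This is a simplified sketch, not an AWS API: `unreserved_pool` and `can_start` are hypothetical helpers, and the running counts are made-up numbers.

```python
# Hypothetical model of reserved vs. unreserved concurrency (not an AWS API).
ACCOUNT_LIMIT = 1000


def unreserved_pool(reserved: dict[str, int], limit: int = ACCOUNT_LIMIT) -> int:
    """Concurrency left over for functions without a reservation."""
    return limit - sum(reserved.values())


def can_start(fn: str, running: dict[str, int], reserved: dict[str, int],
              limit: int = ACCOUNT_LIMIT) -> bool:
    """Decide whether `fn` may start one more execution."""
    if fn in reserved:
        # A function with reserved concurrency draws only from its own reservation.
        return running.get(fn, 0) < reserved[fn]
    # Functions without a reservation share whatever is left over.
    shared_in_use = sum(n for name, n in running.items() if name not in reserved)
    return shared_in_use < unreserved_pool(reserved, limit)


reserved = {"A": 250, "B": 100}
print(unreserved_pool(reserved))  # 650
running = {"A": 250, "B": 10, "C": 400, "D": 250}
print(can_start("A", running, reserved))  # False: A is at its reservation of 250
print(can_start("B", running, reserved))  # True: B uses 10 of its 100
print(can_start("C", running, reserved))  # False: C + D = 650, the pool is full
```

Note that C cannot borrow B's 90 idle reserved executions: in this model, as in Lambda, a reservation is set aside for its function alone.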

Please note: There must be at least 100 unreserved concurrent executions per region. Hence, the maximum total reserved concurrency across all functions in a region is 900 (1000 - 100).
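A planned set of reservations can be checked against this rule before applying it. Lambda rejects a reservation that breaks the rule on its own; the hypothetical `check_reservation` helper below only reproduces the arithmetic, assuming the 1000 limit and the 100-execution minimum. Function `E` is likewise a made-up name.

```python
# Hypothetical check mirroring the rule above; it does not call AWS.
ACCOUNT_LIMIT = 1000
MIN_UNRESERVED = 100  # minimum unreserved concurrency per region


def check_reservation(reserved: dict[str, int], fn: str, value: int,
                      limit: int = ACCOUNT_LIMIT,
                      min_unreserved: int = MIN_UNRESERVED) -> int:
    """Return the unreserved concurrency left if `fn` reserves `value`.

    Raises ValueError when the change would leave fewer than
    `min_unreserved` executions for functions without a reservation.
    """
    # Replace fn's existing reservation (if any) with the new value.
    total = sum(v for name, v in reserved.items() if name != fn) + value
    remaining = limit - total
    if remaining < min_unreserved:
        raise ValueError(
            f"reserving {value} for {fn} leaves {remaining} unreserved; "
            f"at most {limit - min_unreserved} can be reserved in total"
        )
    return remaining


reserved = {"A": 250, "B": 100}
print(check_reservation(reserved, "E", 550))  # 100: total reserved is exactly 900
try:
    check_reservation(reserved, "E", 551)
except ValueError as err:
    print(err)  # 551 would leave only 99 unreserved
```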

Why do we need reserved concurrency?

- Reserved concurrency is useful for allocating concurrency to a function that needs dedicated priority over the others. Because the concurrency is reserved, the function can always scale up to that amount without waiting for other functions to release concurrency. Note that the reserved value is also the function's maximum concurrency.

- Another reason is to protect the load on other related services, especially VPC-based resources. For example, as in the diagram below, you have a Lambda function that is triggered by an SQS queue, processes the data, and saves it to a relational database in RDS that sits in a VPC.

Simply put, an Elastic Network Interface (ENI) can hold only a limited number of IP addresses, and that maximum depends on the instance type. So the VPC side might not be able to accept data at the rate Lambda sends it, which creates a bottleneck at the ENI.

So, we need to estimate the maximum concurrency the ENI can handle and set the Lambda function's reserved concurrency accordingly, so that it does not overload the ENI.
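One way to estimate these numbers is the relationship AWS uses to describe Lambda concurrency: concurrency ≈ average requests per second × average execution duration in seconds. The sketch below applies it to assumed numbers; the helper names are hypothetical, and the database capacity of 50 concurrent writers is purely illustrative and should come from your own load tests.

```python
import math


def required_concurrency(requests_per_second: float, avg_duration_s: float) -> int:
    """Concurrency needed to keep up with the incoming rate.

    Uses concurrency = average requests per second x average duration (s).
    """
    return math.ceil(requests_per_second * avg_duration_s)


def reserved_concurrency_for(required: int, downstream_max: int) -> int:
    """Never reserve more concurrency than the VPC resource can absorb."""
    return min(required, downstream_max)


def max_throughput(concurrency: int, avg_duration_s: float) -> float:
    """Messages per second the function can process at this concurrency."""
    return concurrency / avg_duration_s


# Hypothetical numbers: 200 SQS messages/s, 0.5 s per message,
# and a database that copes with at most 50 concurrent writers.
needed = required_concurrency(200, 0.5)           # 100
reserved = reserved_concurrency_for(needed, 50)   # 50
print(needed, reserved, max_throughput(reserved, 0.5))  # 100 50 100.0
```

In this model the function processes about 100 messages per second, and the surplus builds up as a backlog in the SQS queue instead of overloading the VPC resource.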

Lambda Concurrency Metrics

Two CloudWatch metrics are particularly useful for monitoring Lambda concurrency.

- ConcurrentExecutions

This is the total number of concurrent executions across all functions in a region at a given point in time.

- UnreservedConcurrentExecutions

This is the total concurrency of the functions in a region that do not have reserved concurrency configured, at a given point in time.
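To pull these metrics programmatically, one option is CloudWatch's GetMetricStatistics API. The sketch below only builds its arguments, so it runs without AWS credentials. `concurrency_metric_query` is a hypothetical helper, the one-hour window with 60-second periods is an arbitrary default, and the restriction that `UnreservedConcurrentExecutions` is reported only per region is an assumption based on its description above.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

CONCURRENCY_METRICS = ("ConcurrentExecutions", "UnreservedConcurrentExecutions")


def concurrency_metric_query(metric_name: str,
                             function_name: Optional[str] = None,
                             minutes: int = 60,
                             period_s: int = 60,
                             now: Optional[datetime] = None) -> dict:
    """Build keyword arguments for CloudWatch GetMetricStatistics."""
    if metric_name not in CONCURRENCY_METRICS:
        raise ValueError(f"unexpected metric: {metric_name}")
    if function_name is not None and metric_name == "UnreservedConcurrentExecutions":
        # Assumption: this metric is only reported for the whole region.
        raise ValueError("UnreservedConcurrentExecutions has no per-function dimension")
    end = now or datetime.now(timezone.utc)
    params = {
        "Namespace": "AWS/Lambda",
        "MetricName": metric_name,
        "StartTime": end - timedelta(minutes=minutes),
        "EndTime": end,
        "Period": period_s,
        "Statistics": ["Maximum"],  # peak concurrency within each period
    }
    if function_name is not None:
        # Narrow ConcurrentExecutions down to a single function.
        params["Dimensions"] = [{"Name": "FunctionName", "Value": function_name}]
    return params
```

With boto3 installed and credentials configured, the result can be passed as `boto3.client("cloudwatch").get_metric_statistics(**params)`; each entry in the response's `Datapoints` list then carries the `Maximum` value for one period.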

👋 I regularly create content on AWS and Serverless, and if you’re interested, feel free to follow / connect with me so you don’t miss out on my latest posts!

- LinkedIn: https://www.linkedin.com/in/pubudusj

- Twitter/X: https://x.com/pubudusj